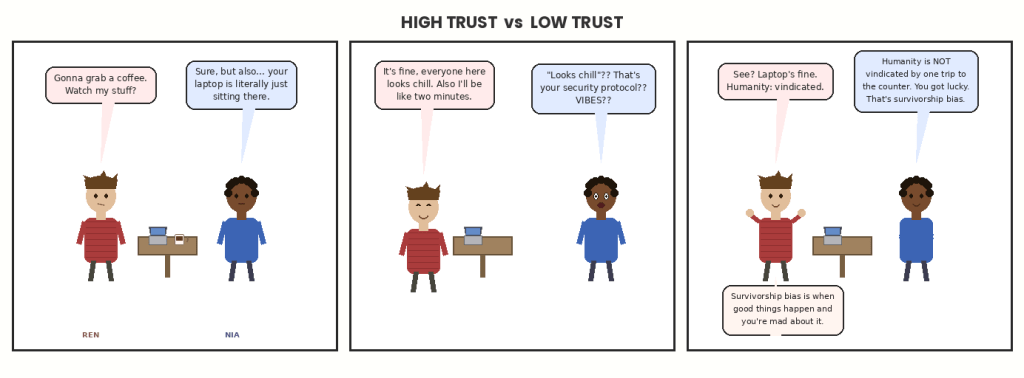

Two operating systems for handling strangers, sandwiches, and the social contract

There is a binary that cuts through nearly every social arrangement we have: whether you assume goodwill or verify compliance. Whether you treat defection as a noise term or a signal. Whether the default is “trust until proven otherwise” or “trust after verification.” This is not exactly a personality difference, though it correlates with personality. It’s more like an operating system preference: some people run High Trust, some people run Low Trust, and the remarkable thing is how consistently the preference cascades into everything else.

* * *

The classic thought experiment is the unlabeled food in a shared office fridge. The High Trust move is to put your lunch in without marking it and assume your coworkers will not steal it. The Low Trust move is to write your name on it, maybe add a date, possibly attach a passive-aggressive note. Both are solving the same coordination problem. Both are attempting to protect the same resource. But they have different priors about how the world works, and the world, being made mostly of mirrors, tends to prove both of them correct.

The High Trust person says: most people are basically decent, and the transaction cost of creating and enforcing a labeling system is higher than the occasional sandwich loss. The Low Trust person says: some people will absolutely steal your lunch if you don’t mark it, and the transaction cost of replacing lunch is higher than the cost of a label. Both are empirically defensible. What makes this interesting is that the two orientations create different environments, which then generate different evidence, which then reinforce the original orientation. It’s a loop, not a line.

Here’s how the loop works. If you mark your lunch, you signal that you expect defection. That signal is information. It tells potential lunch thieves that they live in a Low Trust environment, which might make them more likely to defect when they wouldn’t have otherwise, since clearly everyone else is also operating on defection logic. If you don’t mark your lunch, you signal that you expect cooperation. This might make someone less likely to steal it, because taking food from someone who has openly extended trust feels more like a betrayal than raiding a fridge that is already organized around suspicion. Or it might make them more likely to steal it, since unmarked food is easy to take and later claim you “forgot” wasn’t yours, and you’ve indicated you won’t enforce consequences. Both outcomes are possible. The environment you’re in determines which dominates.

This makes High Trust and Low Trust self-reinforcing in practice, but not symmetrically. High Trust equilibria are fragile. They require most people to cooperate most of the time, and a single visible defector can cascade into a norm shift. Low Trust equilibria are robust. They assume defection and build accordingly. Once you’re in a Low Trust equilibrium, getting out requires coordinated trust-building that feels unsafe to everyone involved. You have to collectively stop verifying, which is terrifying if you’re not sure the other parties will also stop verifying. Mutual disarmament, but for social norms.
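The fragile/robust asymmetry can be made concrete with a toy threshold model, in the spirit of Granovetter’s threshold models of collective behavior. This is a minimal sketch under invented assumptions: a hypothetical population of 100 people, split into three groups who will cooperate next round only if at least 40, 60, or 80 of the 100 cooperated this round. The group sizes, thresholds, and the `step`/`run` helpers are all made up for illustration.

```python
# A toy threshold model of a trust equilibrium. All numbers are invented:
# 10 people cooperate next round if at least 40 of the 100 cooperated this round,
# 30 people need at least 60, and 60 people need at least 80.
THRESHOLDS = [40] * 10 + [60] * 30 + [80] * 60


def step(cooperators, thresholds=THRESHOLDS):
    """Return how many people cooperate next round, given this round's count."""
    return sum(1 for t in thresholds if cooperators >= t)


def run(start, thresholds=THRESHOLDS, max_rounds=50):
    """Iterate from `start` cooperators until the count stops changing."""
    path = [start]
    for _ in range(max_rounds):
        nxt = step(path[-1], thresholds)
        if nxt == path[-1]:
            break
        path.append(nxt)
    return path


print(run(100))  # [100]: full cooperation sustains itself
print(run(80))   # [80, 100]: a one-round shock of 20 defections is absorbed
print(run(79))   # [79, 40, 10, 0]: one more defection and it unravels
print(run(0))    # [0]: full defection also sustains itself
print(run(60))   # [60, 40, 10, 0]: even 60 coordinated cooperators can't escape
```

Both 0 and 100 are stable, but the tipping point sits at 80: in this invented population, High Trust survives at most 20 simultaneous defections, while escaping Low Trust takes at least 80 people cooperating at once. Where the tipping point lands depends entirely on the made-up thresholds; the asymmetry shows up whenever most people need most others to cooperate first.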

The literature on this is enormous and touches everything from game theory to institutional economics to anthropology. There are Axelrod’s tournaments on the iterated Prisoner’s Dilemma, where “tit-for-tat” (cooperate first, then mirror your opponent’s last move) outperformed both pure cooperation and pure defection. There is Ostrom’s work on commons management, which found that small communities can self-regulate shared resources if they meet a handful of fairly specific conditions, most of which amount to “the people involved know each other and will keep interacting.” And there is the famous “broken windows” theory in criminology, which claims that visible signs of disorder (broken windows, graffiti) signal that the environment is Low Trust, which in turn increases disorder. That one’s contested empirically, but the underlying model, that trust environments are contagious, stands on much firmer ground.
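A toy version of the tournament shows why tit-for-tat does well. This is a minimal sketch, not a reconstruction of Axelrod’s actual entries: it assumes the standard payoff values (5, 3, 1, 0), 200-round games, and a hypothetical pool of four simple strategies, each of which also plays one copy of itself.

```python
from itertools import combinations_with_replacement

# Standard Prisoner's Dilemma payoffs (T=5, R=3, P=1, S=0), keyed by (my move, their move).
PAYOFF = {("C", "C"): 3, ("C", "D"): 0, ("D", "C"): 5, ("D", "D"): 1}


def all_cooperate(mine, theirs):
    return "C"


def all_defect(mine, theirs):
    return "D"


def tit_for_tat(mine, theirs):
    # Cooperate on the first move, then copy the opponent's previous move.
    return theirs[-1] if theirs else "C"


def suspicious_tit_for_tat(mine, theirs):
    # Same as tit-for-tat, but opens with a defection.
    return theirs[-1] if theirs else "D"


def play(strat_a, strat_b, rounds=200):
    """Play one iterated game and return (score_a, score_b)."""
    hist_a, hist_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        move_a = strat_a(hist_a, hist_b)
        move_b = strat_b(hist_b, hist_a)
        score_a += PAYOFF[(move_a, move_b)]
        score_b += PAYOFF[(move_b, move_a)]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return score_a, score_b


def tournament(strategies, rounds=200):
    """Round robin in which every strategy also plays one copy of itself."""
    totals = {name: 0 for name in strategies}
    for name_a, name_b in combinations_with_replacement(strategies, 2):
        score_a, score_b = play(strategies[name_a], strategies[name_b], rounds)
        totals[name_a] += score_a
        if name_a != name_b:
            totals[name_b] += score_b
    return totals


STRATEGIES = {
    "tit_for_tat": tit_for_tat,
    "all_cooperate": all_cooperate,
    "all_defect": all_defect,
    "suspicious_tft": suspicious_tit_for_tat,
}

for name, total in sorted(tournament(STRATEGIES).items(), key=lambda kv: -kv[1]):
    print(name, total)
# tit_for_tat 1899
# all_cooperate 1797
# all_defect 1604
# suspicious_tft 1502
```

Tit-for-tat finishes first without winning a single head-to-head game: it loses 199 to 204 against `all_defect` and ties everyone else. The ranking also depends on the pool. Drop `suspicious_tft` and `all_defect` edges ahead, 1404 to 1399, because `all_cooperate` is left as an easy mark.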

One of the clearest places to watch this binary in action is small-scale lending. Microfinance institutions in the developing world found that group lending (where a small circle of borrowers informally vouch for each other’s loans) could achieve strikingly low default rates, even when the group members had no legal liability for each other’s debts. The mechanism is pure High Trust infrastructure: the borrowers know each other, they have ongoing relationships, and defaulting means social consequences within the group. You cannot simply port this model to anonymous urban lending, because the entire enforcement mechanism depends on High Trust’s invisible substrate. The contract is not what’s doing the work. The relationship is.

Here’s the thing that makes this binary genuinely difficult: both approaches have pathologies, and both pathologies are about mistiming. High Trust applied to an environment that actually requires Low Trust gets you exploited. Low Trust applied to an environment that would support High Trust gets you isolated. And because environments can shift — because a High Trust environment can become Low Trust if enough people defect, or a Low Trust environment can stabilize into High Trust if enforcement becomes reliable — you can be correct at time T and incorrect at time T+1 while doing exactly the same thing.

This explains part of why intergenerational arguments about this are so bitter. The parent who grew up in a High Trust environment (small town, everyone knew everyone, reputation mattered immensely) teaches their kid High Trust norms. The kid grows up in a Low Trust environment (city, anonymity, transactional relationships) and gets burned. The parent sees this and thinks the kid is doing it wrong. The kid sees this and thinks the parent is out of touch. They are both correct about their own context. Neither can quite believe the other’s context is real.

The policy version of this is brutal. Almost every major debate about institutional design is a High Trust vs Low Trust argument. Should police require warrants to search, or should we trust them to use discretion? Should teachers be monitored for effectiveness, or should we trust the profession to self-regulate? Should political candidates be required to disclose financials, or should we trust voters to care about character? These are not debates about facts. They’re debates about priors, and your prior is usually set by which failure mode you’ve personally experienced.

There’s a specific mechanism worth naming here: verification crowding out trust. This is when adding a Low Trust mechanism to a High Trust environment destroys the trust it was meant to protect. The classic study looked at daycare centers in Israel that started fining parents for picking up children late. Economics 101 says this should reduce late pickups: you’ve made defection costly. What actually happened is that late pickups increased. The fine converted the implicit social obligation (“I’m inconveniencing these teachers, I should feel guilty”) into an explicit price (“I can just pay for the extra time”). The Low Trust mechanism destroyed the High Trust mechanism it was meant to supplement.

You can watch this same dynamic play out in organizational management. A company that monitors employee keystrokes and bathroom breaks can end up getting worse output from the same people who would have worked harder under less surveillance. The surveillance doesn’t just fail to increase productivity. It signals to employees that their intrinsic motivation is not trusted, which is information they act on. Meanwhile, the company reads the resulting slump as evidence that the employees needed to be monitored in the first place.

Both sides of this loop are correct about something. Some employees really will slack when nobody is watching and the work is otherwise invisible. Oversight really does crowd out intrinsic motivation. These are both true. The question is which effect dominates in your particular context, and the answer is not universal.

One of the deepest cuts of this binary is geographic. There is compelling cross-national research suggesting that social trust (usually measured with survey questions along the lines of “would you say that most people can be trusted?”) correlates with economic development, low corruption, functioning civic institutions, and various other good things. The causality almost certainly runs in all directions simultaneously: High Trust makes institutions work better, which makes trust more warranted, which makes trust more common, which gives institutions more slack, and so on upward. Conversely, Low Trust cultures can get stuck in equilibria where institutions are corrupt because no one trusts anyone else enough to stop defecting, which makes the institutions more corrupt, which makes distrust more rational.

This gets brought up a lot in discussions about immigration, development economics, and (catastrophically, inevitably) various forms of ethnic nationalism that I’m not going to litigate here. The finding itself is real. The conclusions people draw from it vary enormously in their quality.

The thing that gets underweighted in these discussions is how rapidly trust levels can shift with context. The same person who trusts everyone in their small hometown extends that trust only to a much smaller circle in an anonymous city. The same person who locks their car in one neighborhood leaves it unlocked in another. Trust is not a fixed character trait. It’s a model, constantly updated on evidence.

This means that “High Trust culture” and “Low Trust culture” are really shorthand for “this environment has historically produced enough positive interactions to support a High Trust equilibrium” and “this environment has historically produced enough defections to make a Low Trust equilibrium adaptive.” Which means the cultures can change, much faster than the “deep cultural values” framing usually implies, if the underlying environment changes.

Post-Soviet Eastern Europe is the classic case study here. Societies that spent decades under systems where informal cooperation was dangerous (neighbors reported neighbors, the state monitored everything, trust was actively weaponized against you) emerged with measurably lower social trust than comparable societies that didn’t have the same experience. This wasn’t an ethnic or character difference. It was a rational adaptation to a specific set of incentives, and it persisted for a generation or two after the incentives changed, exactly as you’d expect from an updating model with memory.

The deep-cut example I can’t resist: the MMORPG Eve Online, which is famous for being perhaps the most Low Trust game environment ever deliberately designed. The game’s developers made almost everything potentially stealable, scammable, and betrayable. The in-game economy runs on contracts that can be defaulted on. Corporations (guilds) can be infiltrated and looted from the inside. The developers explicitly built an environment where Low Trust is not paranoia but correct risk management.

What emerged from this was extraordinary: a fully functioning Low Trust economy with remarkably sophisticated insurance markets, reputation systems, contract-enforcement guilds, and arbitration mechanisms. High Trust behavior still exists in Eve, but it’s been priced, packaged, and bounded by explicit verification systems. The game is basically a running proof of concept that Low Trust isn’t nihilism; it’s just a different architecture for coordination.

What Eve doesn’t model well is the cost of building and maintaining all that verification infrastructure. Every scam you have to check for is friction. Every contract you have to structure defensively is time. Societies don’t choose between High and Low Trust the way game designers do. They inherit a historical distribution and try to manage it.

Here are five examples of this binary at work that haven’t come up yet:

- Academic citation culture: High Trust fields assume citations are accurate without checking. Low Trust fields (and subfields after high-profile fraud cases) require independent replication before results carry real weight. The same piece of evidence means something different depending on which norm governs the community receiving it.

- Restaurant tipping in the US vs service-included countries: Tipping is a Low Trust mechanism that makes payment contingent on verified service quality. Service-included pricing is High Trust in a specific way: it trusts that institutional incentives (reputation, hiring, management) will produce good service without direct customer control over compensation. Neither system produces universally better service. They produce different incentive structures with different failure modes.

- Potluck dinners: The classic High Trust social event. You’ve been told what to bring, you’re trusted to bring it, and the meal only works if almost everyone shows up having actually done so. Societies that don’t do potlucks, or whose members bring the same dish every time regardless of what was requested, may be revealing something real about their underlying trust architecture, not just a different culinary preference.

- Open-source software licenses: The spectrum from MIT (“do whatever you want, we trust you”) to GPL (“you can use this but you must also share your changes, enforced by copyright law”) is almost entirely a High Trust vs Low Trust argument about whether goodwill or legal mechanism better maintains community contribution norms. Both have produced enormous software. The argument has been running for more than three decades without resolution.

- Anonymous peer review: Science’s solution to a trust problem. Reviewers can’t be bought or intimidated if authors don’t know who they are. Authors can’t be discriminated against on identity if reviewers don’t know who they are. Double-blind review is a Low Trust mechanism designed to make High Trust outcomes more reliable. The fact that it’s not universally adopted is itself a piece of evidence about different communities’ assessment of which failure mode is worse.

It’s worth sitting with how differently the two orientations experience the same interaction. A Low Trust person who is never cheated takes that as evidence that their caution is working. A High Trust person observing the same Low Trust person sees someone who has spent enormous energy on caution and received nothing in return. Both readings are technically consistent with the data. Neither can convince the other without changing the underlying model.

The frustrating and interesting thing is that both are also right about different time horizons. High Trust investments pay off slowly and can be wiped out quickly. You build trust over years and lose it in a week. Low Trust investments are expensive immediately and pay off in robustness to bad actors. If you never meet a bad actor, you’ve wasted your money. If you do, you’re extremely glad you paid.

This means that which orientation is “correct” depends heavily on your base rate for defection, your ability to recover from a betrayal, your time discount rate, and whether you’re dealing with a one-shot interaction or a repeated game. Strangers in cities: probably Low Trust is adaptive. Neighbors you’ve lived next to for a decade: probably High Trust is adaptive. The same person correctly holds both simultaneously and switches between them almost automatically. What we call “cultural trust level” is really a description of where someone has drawn the default line in ambiguous cases.
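The first two factors in that list can be reduced to a back-of-the-envelope rule. Here is a minimal sketch, assuming (hypothetically) that verification costs a fixed amount per interaction and fully blocks defection, and that “ability to recover” means a largest single loss you can absorb. It ignores discounting and repeated-game effects entirely, and the `cheaper_policy` function and every number below are invented for illustration.

```python
from fractions import Fraction


def cheaper_policy(defect_rate, loss, verify_cost, buffer=None):
    """Pick 'trust' or 'verify' for one interaction (a hypothetical rule).

    defect_rate: chance the other party defects, as a Fraction.
    loss: what you lose if they defect and you didn't verify.
    verify_cost: what checking costs; assumed here to block defection entirely.
    buffer: optional largest single loss you could recover from.
    """
    if buffer is not None and loss > buffer and defect_rate > 0:
        # One betrayal would be unrecoverable, so pay for protection at any base rate.
        return "verify"
    expected_loss = defect_rate * loss
    return "trust" if expected_loss < verify_cost else "verify"


# Strangers in a city: 1 in 20 defects; a check costs 1, a betrayal costs 100.
print(cheaper_policy(Fraction(1, 20), 100, 1))    # verify (expected loss 5 > 1)
# A neighbor of ten years: 1 in 1000 defects, same stakes.
print(cheaper_policy(Fraction(1, 1000), 100, 1))  # trust (expected loss 1/10 < 1)
# Same neighbor, but a loss of 500 against a recovery buffer of 200.
print(cheaper_policy(Fraction(1, 1000), 500, 1, buffer=200))  # verify
```

Under these assumptions the break-even base rate is `verify_cost / loss`, here 1 in 100. The last case shows the recovery factor overriding the arithmetic: the expected loss is only 1/2, but a single betrayal would be ruinous. The time-horizon and repeated-game factors are exactly what this sketch leaves out.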

The ideal, if we’re going to say that word, is not simply “more trust” or “less trust.” It’s a person who can read which context they’re in and deploy the appropriate tool. The High Trust person who can’t update when they’ve been genuinely betrayed will be exploited indefinitely. The Low Trust person who can’t switch to High Trust when the environment warrants it will pay transaction costs forever and never build the deep cooperative relationships that compound over time.

A saw is not better than a hammer. But using a saw when you need a hammer is a category error, and the category error has costs.

What we are really describing, when we draw this line between High Trust and Low Trust, is two different theories of the human animal’s default state, and both theories are old enough to have acquired the weight of metaphysics. One says: the natural condition of persons, absent corruption, is cooperation. The other says: the natural condition of persons, absent constraint, is opportunism. Pelagius and Augustine. Rousseau and Hobbes. The noble savage and the nasty, brutish, short. These are not opposite conclusions from the same evidence. They are different visions of what the evidence is for.

Neither is wrong. Neither is complete. The person who believes in natural cooperativeness and the person who believes in natural defection are both looking at the same primate with the same brain, and both have selected their evidence set correctly. We are both. The question is always which mode gets triggered by the specific stimulus, and what you build to channel that.

There is a form of High Trust so total that it becomes a kind of sacred sensibility, a way of treating the other person’s unknown interiority as fundamentally good until proven otherwise, not because the evidence compels it but because the alternative is to live inside a lock. Medieval guild systems ran partly on this; small credit cooperatives in West Africa run partly on this still. The debt that binds is not legal but moral, and the moral debt is enforced by something older and less articulable than a court. Call it the social soul, the egregore of a community, the fact that human beings are intensely social animals whose reputational tracking systems were honed over hundreds of thousands of years and operate at speeds courts cannot approach. When High Trust works, it works because this older enforcement system is firing correctly. When it fails, it fails because that system has been disrupted: by anonymity, by scale, by the specific corrosive effects of wealth differentials on social obligation.

And Low Trust has its own theology, just as old. The contract, the double-entry ledger, the specification of terms, the written record that does not depend on anyone’s memory or goodwill: these are not modern inventions but among the oldest human technologies. Writing may have been invented as a trust mechanism, to record grain quantities across a harvest that no single mind could hold. The Mesopotamian merchants who sealed clay tablets with their cylinder seals were Low Trust in a very specific and sophisticated way: they were encoding the terms of cooperation into a medium that survived the death of the relationship. Low Trust, at its best, is a kind of respect. It says: the arrangement between us is real and it should be able to outlast us, and neither of us should have to depend on the other’s benevolence.

What neither theology can fully admit is that the other is necessary. The contract only means anything if the parties intend to honor it, and that intention is not itself in the contract. The handshake only binds if both parties share enough world to know what it means to bind. There is always, irreducibly, a layer of unverifiable trust underneath any verification system. The bank checks your credit score, and then trusts that the model captures something real. The friend trusts you completely, and builds that trust on years of small observed behaviors that are themselves uncontractable. The two systems are not competing. They are different layers of the same structure, and the one that’s invisible in any given moment is usually the one doing the most work.

Lean on this. Every institution you have ever trusted is resting on a substrate of interpersonal trust that was never formalized, would collapse if you formalized it, and that the institution cannot replace if it breaks. Every relationship you have ever trusted is leaning against a wall of institutional guarantees that you forgot were there. The wall and the window are separate things but they are holding up the same house.

Which means the question is not which one you are, fundamentally, as a type. The question is which one you have forgotten you are depending on.

That is always the question. And neither the High Trust person nor the Low Trust person has a satisfying answer, which is why the argument is still running, and which is also, in some part, why the house is still standing.

The Fridge

A dialogue on High Trust vs Low Trust, featuring Vyacheslav Breaker and Bartuk Codex arguing about communal kitchen policy

The breakroom of a shared office. A small refrigerator hums against the wall. Bartuk is taping a handwritten label — “BARTUK. TUESDAY. DO NOT TOUCH.” — onto a container of leftover lamb stew. Vyacheslav enters, holding an unlabeled brown bag, which he places in the fridge without ceremony.

BARTUK: You’re not going to label that.

VYACHESLAV: Why would I label it?

BARTUK: Because there are eleven people who use this fridge and at least three of them have stolen my food in the last calendar year.

VYACHESLAV: “Stolen” is interesting. Were they hungry?

BARTUK: That is not the relevant variable.

VYACHESLAV: It’s a little bit the relevant variable. If someone eats my lunch, probably they needed lunch. I can go get another one. The system worked. Food existed, a hungry person found it. Wonderful.

BARTUK: The system “worked” in the same way that a bank robbery “works” if you happen to be the robber. You brought a lunch. You prepared it. You allocated time and resources. Someone else consumed the output of that labor without negotiation or compensation. That is not a system working. That is a system failing in a way that benefits the person with the least scruples.

VYACHESLAV: Bartuk, you are describing a sandwich.

BARTUK: I am describing a sandwich and the principle behind the sandwich.

VYACHESLAV: You know what I’ve noticed? In the three years I’ve been putting unlabeled food in this fridge, I’ve lost maybe four meals. Four. And in that same period, I have spent exactly zero minutes writing labels, zero minutes checking if my food has been touched, zero minutes composing passive-aggressive notes, and zero minutes resenting my coworkers. If I factor in the time savings alone, I’m running a surplus.

BARTUK: You are subsidizing theft and calling it efficiency.

VYACHESLAV: I’m calling it a rounding error, which is what it is. Four sandwiches in three years. I’ve lost more food to forgetting it was in there.

BARTUK: And the person who ate those four sandwiches? They learned that your food is available. The rate will increase. You have trained an organism to exploit you, and you are proud of this.

VYACHESLAV: Or I’ve trained an organism to feel a small, warm sense of goodwill toward me, and the next time I need something from them, there’s a little bit of unspoken social credit sitting there. I didn’t buy that. I didn’t negotiate for it. It just exists, because I wasn’t keeping score.

BARTUK: You don’t know that. You’re inventing a narrative where generosity pays invisible dividends. Maybe it does. Maybe the person who ate your sandwich thinks you’re a pushover and would never help you move a couch.

VYACHESLAV: Honestly? If I needed help moving a couch, I would simply ask the room, and someone would help me, because that is what people do.

BARTUK: You live in a fantasy.

VYACHESLAV: I live in a very comfortable fantasy where I don’t carry a label maker to work.

Bartuk finishes applying a second label to the lid of his container. This one reads “CAMERAS EXIST.”

VYACHESLAV: There are no cameras in here.

BARTUK: They don’t know that.

VYACHESLAV: Bartuk.

BARTUK: What? It’s a deterrent. Deterrents work. That is the entire basis of civilization. You think the social contract is people holding hands and skipping toward mutual flourishing? The social contract is “there are consequences.” My label communicates consequences.

VYACHESLAV: Your label communicates that you are a man who brought lamb stew to work and is frightened of his coworkers.

BARTUK: I am not frightened. I am calibrated. There’s a difference. A frightened man doesn’t bring stew at all. I bring the stew. I label the stew. The stew is protected. I eat the stew. Every step in the process is accounted for. Nothing is left to chance or to the goodness of people’s hearts, which, as I have repeatedly documented—

VYACHESLAV: You documented it?

BARTUK: I keep a ledger.

VYACHESLAV: Of stolen office food.

BARTUK: Of losses, yes. With dates. And suspected parties.

VYACHESLAV: And what does the ledger tell you?

BARTUK: That since I started labeling, losses have dropped to zero. Zero. Which means the labels work. Which means the problem was real. Which means your approach of cheerful indifference is subsidizing exactly the behavior that my labels have eliminated. You’re welcome, by the way. My labels are protecting your lunch too.

VYACHESLAV: That’s genuinely possible. I hadn’t considered that.

BARTUK: You hadn’t— you hadn’t considered—

VYACHESLAV: I don’t spend a lot of time considering the fridge. That’s sort of my whole point.

A silence. Bartuk stares at Vyacheslav. Vyacheslav smiles pleasantly.

BARTUK: Let me ask you something. If I stopped labeling. If everyone in this office stopped labeling. And losses went up. Significantly. Would you change your approach?

VYACHESLAV: Probably not. I’d just bring cheaper lunches.

BARTUK: You would adjust your input to accommodate their theft rather than address the theft itself.

VYACHESLAV: I would adjust my input to minimize my total hassle, yes. The theft costs me three dollars. The hassle of preventing the theft costs me something I value more, which is not having to think about it.

BARTUK: You have priced your own dignity below a sandwich.

VYACHESLAV: No, I’ve priced my peace of mind above a sandwich. You’ve done the reverse.

Another silence. Bartuk opens the fridge. Vyacheslav’s unlabeled bag has already been pushed to the back by someone else’s Tupperware. Bartuk’s container, bristling with labels, sits front and center, unmolested, sovereign.

BARTUK: Mine is still there.

VYACHESLAV: So is mine.

BARTUK: For now.

VYACHESLAV: For now is all we ever have, Bartuk. Do you want some of my crackers? I brought too many.

Bartuk takes two crackers. He does not say thank you. He does, however, bring Vyacheslav a coffee later that afternoon, without being asked, and without recording it in the ledger.
